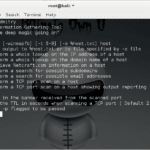

DMitry (Deepmagic Information Gathering Tool) is a UNIX/(GNU) Linux command line program coded purely in C with the ability to gather as much information as possible about a host. DMitry has base functionality with the ability to add new functions. The basic functionality of DMitry allows for information to be gathered about a target […]

Archives for July 2016

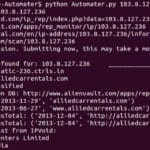

Automater – IP & URL OSINT Tool For Analysis

Automater is a URL/Domain, IP address, and MD5 hash OSINT tool aimed at making the analysis process easier for intrusion analysts. Given a target (URL, IP, or hash) or a file full of targets, Automater will return relevant results from sources like the following: IPvoid.com, Robtex.com, Fortiguard.com, unshorten.me, Urlvoid.com, Labs.alienvault.com, ThreatExpert, VxVault, and VirusTotal. By […]
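As a rough illustration of the "given a target (URL, IP, or hash)" step, here is a minimal Python sketch of how a target string might be sorted into one of those three kinds before any lookups happen. This is not Automater's actual code; the function name `classify_target` and the fallback rule (anything that is not an IP or a 32-character hex string is treated as a URL/domain) are assumptions made for the example.

```python
import ipaddress
import re

# Hypothetical helper, not part of Automater: an MD5 digest is 32 hex characters.
MD5_RE = re.compile(r"[0-9a-fA-F]{32}")


def classify_target(target: str) -> str:
    """Return "ip", "md5", or "url" for a single target string."""
    target = target.strip()
    try:
        # ip_address() raises ValueError for anything that is not IPv4/IPv6.
        ipaddress.ip_address(target)
        return "ip"
    except ValueError:
        pass
    if MD5_RE.fullmatch(target):
        return "md5"
    # Assumption: everything else is treated as a URL or domain name.
    return "url"


for t in ["8.8.8.8", "d41d8cd98f00b204e9800998ecf8427e", "example.com"]:
    print(t, "->", classify_target(t))
```

A file full of targets could be handled the same way by calling the helper once per line.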

Android Malware Giving Phones a Hummer

Android malware has always been quite a problem, especially with it being so easy to install random .apk files and the proliferation of 3rd party app stores. There are also so many people with rooted phones, and installed software can root your phone and take complete control. The current worry is the Hummer […]

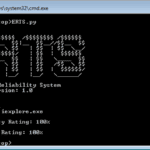

ERTS – Exploit Reliability Testing System

ERTS, or Exploit Reliability Testing System, is a Python-based tool to calculate the reliability of an exploit based on the number of times the exploit is able to control the EIP register with the desired address/value. It’s created to help you code reliable exploits and take the manual parts out of running and re-running exploits […]
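The reliability idea described above can be sketched as a simple ratio: the number of runs in which EIP ended up holding the desired value, divided by the total number of runs. The snippet below is only an illustration under that assumption and is not taken from ERTS; the function name `exploit_reliability` and the sample EIP values are made up for the example.

```python
from typing import Iterable


def exploit_reliability(observed_eips: Iterable[int], desired_eip: int) -> float:
    """Return the fraction of runs in which EIP held the desired value."""
    observed = list(observed_eips)
    if not observed:
        raise ValueError("at least one run is required")
    # Count the runs where the exploit controlled EIP as intended.
    hits = sum(1 for eip in observed if eip == desired_eip)
    return hits / len(observed)


# Hypothetical EIP values recorded across four runs of the same exploit.
runs = [0x41414141, 0x41414141, 0x0804A010, 0x41414141]
print(f"{exploit_reliability(runs, 0x41414141):.0%}")  # prints 75%
```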